In 1999, when NVIDIA launched the NVIDIA GeForce 256 as the world’s first GPU, Jensen Huang, its founder and CEO, could never have foreseen the achievements that two transformational waves of AI, from deep learning to generative AI (genAI), would make possible today. On March 18, 2024, NVIDIA’s GPU Technology Conference (GTC) for AI hardware and software developers returned in person after five years. NVIDIA probably never expected to see even greater enthusiasm from the ecosystem of developers, researchers, and business strategists. Industry analysts, myself included, had to wait about an hour to get into the SAP Center. Even though we had been invited to the keynote with reserved seats, we weren’t able to make our way into the arena because it was simply too crowded and our seats were no longer available.

But after watching the keynote at GTC 2024, I’m very sure about one thing: This event is opening a new chapter in the AI era and marks the beginning of a significant leap in the AI revolution. It builds on the extraordinary efforts of technology pioneers in enterprises, vendors, and academia worldwide. The major announcements at this event span AI hardware infrastructure, a next-gen AI-native platform, and AI software for representative application scenarios. With them, NVIDIA is paving the way to an enterprise AI-native foundation.

AI Infrastructure: Building On Its Impressive Lead

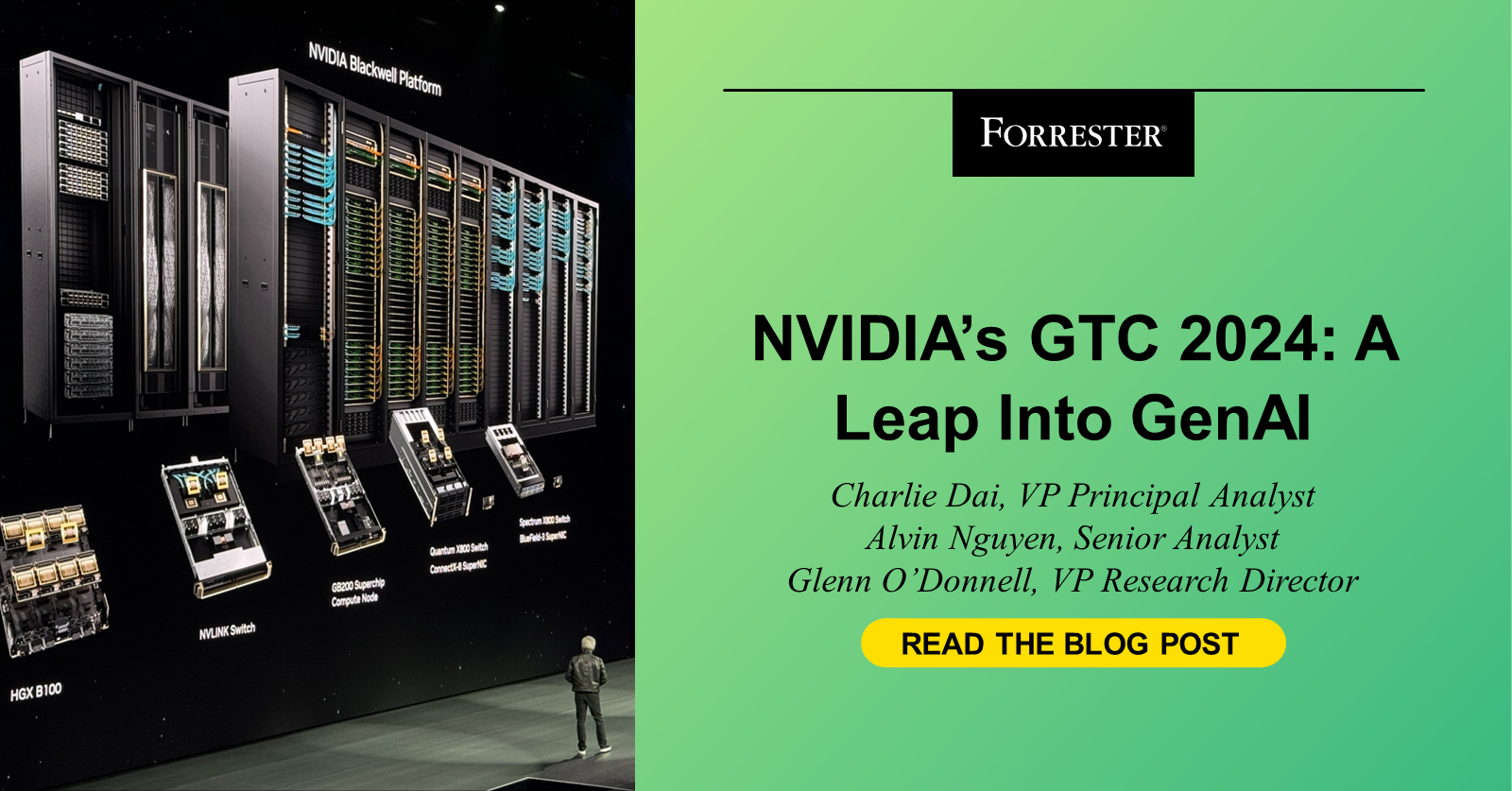

NVIDIA announced several breakthroughs in AI infrastructure. Some representative new products include:

- Blackwell GPU series. The highly anticipated Blackwell GPU is the successor to NVIDIA’s already highly coveted H100 and H200 GPUs. NVIDIA bills it as the world’s most powerful chip for AI workloads. NVIDIA also announced the GB200 Superchip, which combines two Blackwell GPUs with an NVIDIA Grace CPU. Compared with the H100 GPU, this setup is said to offer up to a 30x performance increase for large language model (LLM) inference workloads, with up to 25x greater energy efficiency.

- DGX GB200 system. Each DGX GB200 system features 36 NVIDIA GB200 Superchips (comprising 36 NVIDIA Grace CPUs and 72 NVIDIA Blackwell GPUs) connected as one supercomputer via fifth-generation NVIDIA NVLink. The GB200 Superchips deliver up to a 30x performance increase compared with the NVIDIA H100 Tensor Core GPU for LLM inference workloads.

- DGX SuperPOD. The Grace Blackwell-powered DGX SuperPOD features eight or more DGX GB200 systems. It can scale to tens of thousands of GB200 Superchips connected via NVIDIA Quantum InfiniBand. For a massive shared memory space to power next-generation AI models, customers can deploy a configuration that uses NVLink to connect all 576 Blackwell GPUs (8 × 72) across eight DGX GB200 systems.

What it means: On one hand, these new products represent a significant leap forward in AI computing power and energy efficiency. Once deployed in production, they will significantly improve performance for both training and inferencing, enabling researchers and developers to tackle previously intractable problems. They will also address customer concerns about power consumption. On the other hand, existing H100 and H200 customers will find that their current advantage becomes a limitation compared with the B-series. Tech vendors that planned to generate revenue by reselling AI computing power must also figure out how to ensure ROI.

Enterprise AI Software & Apps: The Next Frontier

NVIDIA has already built an integrated software kingdom around CUDA. It is now strategically building out the capabilities of its enterprise AI portfolio with a cloud-native approach that leverages Kubernetes, containers, and microservices in a distributed architecture. Given its latest achievements in AI software and applications this year, I’m calling this portfolio “genAI-native.” By that I mean it is natively built for genAI development scenarios across training and inferencing, and natively optimized for genAI hardware. Some representative offerings are as follows:

- NVIDIA NIM to accelerate AI model inferencing via prebuilt containers. NVIDIA NIM is a set of optimized cloud-native microservices designed to simplify deployment of genAI models anywhere. It can be thought of as an integrated inferencing platform across six layers: prebuilt containers and Helm charts, industry-standard APIs, domain-specific code, optimized inference engines (e.g., Triton Inference Server™ and TensorRT™-LLM), and support for custom models, with the NVIDIA AI Enterprise runtime as the foundation. This abstraction will provide a streamlined path for developing AI-powered enterprise applications and deploying AI models in production.

- Offerings to advance development in transportation and healthcare. For transportation, NVIDIA announced that BYD, Hyper, and XPENG have adopted the NVIDIA DRIVE Thor™ centralized car computer to power next-generation consumer and commercial fleets. For healthcare, NVIDIA announced more than two dozen new microservices for advanced imaging, natural language and speech recognition, and digital biology generation, prediction, and simulation.

- Offerings to facilitate innovation in humanoids, 6G, and quantum. For humanoids, NVIDIA announced Project GR00T, a general-purpose foundation model for humanoid robots, to further drive development in robotics and embodied AI. For telcos, it unveiled a 6G research cloud platform to advance AI for the radio access network (RAN). The platform consists of NVIDIA Aerial Omniverse Digital Twin for 6G, NVIDIA Aerial CUDA-Accelerated RAN, and the NVIDIA Sionna Neural Radio Framework. For quantum computing, it launched a quantum simulation platform with two highlights: a generative quantum eigensolver powered by an LLM, which finds the ground-state energy of a molecule more quickly, and QC Ware Promethium, which tackles complex quantum chemistry problems such as molecular simulation.

- Expanded partnerships with all major hyperscalers except Alibaba Cloud. Beyond supporting compute instances with the latest chipsets on major hyperscalers, NVIDIA is expanding partnerships in various domains to accelerate digital transformation. For example, on AWS, Amazon SageMaker will integrate with NVIDIA NIM to further optimize the price performance of foundation models running on GPUs, and the two companies will collaborate further on healthcare. NVIDIA NIM is also coming to Azure AI, Google Cloud, and Oracle Cloud for AI deployments. NVIDIA is also pursuing initiatives on healthcare, industrial design, and sovereignty with AWS, Google, and Oracle, respectively.

What it means: NVIDIA has become a competitive software provider in the enterprise space, especially in genAI-related areas. Its lead in AI hardware infrastructure gives it great potential to influence application architecture and the competitive landscape. However, enterprise decision-makers should also realize that its core strength still lies in hardware. It lacks experience and solutioning capabilities in complex enterprise software environments. In addition, the availability of its AI software (and the hardware beneath it) varies across geographic regions, which limits its ability to serve local clients in some of them.

Looking Ahead

Jensen and other NVIDIA executives have been trying hard in recent years to convince their clients that NVIDIA is no longer a GPU company. I’d say that this mission is accomplished as of today. In other words, the GPU is no longer what we thought it was. Two things remain the same, however: an obsession with customer needs and a focus on the IT that drives high business performance. Enterprise decision-makers should keep an eye on NVIDIA’s product roadmap and take a pragmatic approach to turning bold vision into superior performance.

Of course, this is only a fraction of the announcements at NVIDIA GTC 2024. For more perspective from us (Charlie Dai, Alvin Nguyen, and Glenn O’Donnell) or any other Forrester analyst, book an inquiry or guidance session at inquiry@forrester.com.